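In an isolated system, the probability of any given state occurring is proportional to the number of ways (microscopic arrangements) in which that state can be reached, assuming every arrangement is equally likely. The system's tendency to end up in the states that can be reached in the most ways can therefore be described using probability, which is a measure of the likelihood of an event occurring.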
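As a rough illustration of this counting idea, here is a minimal sketch that uses fair coin flips as a stand-in for an isolated system. The coin model and the function name `macrostate_probabilities` are assumptions made for illustration. Each "state" is the number of heads, and the number of ways to reach it is a binomial coefficient.

```python
from math import comb

def macrostate_probabilities(n_coins):
    """Map each head count k to its probability for n fair coins.

    Assumption: every individual sequence of heads/tails is equally likely,
    so a state's probability is (ways to reach it) / (total sequences).
    """
    total_sequences = 2 ** n_coins
    return {k: comb(n_coins, k) / total_sequences for k in range(n_coins + 1)}

probs = macrostate_probabilities(4)
for heads, p in probs.items():
    # comb(4, heads) is the number of ways this state can be reached
    print(f"{heads} heads: {comb(4, heads)} ways, probability {p}")
```

With 4 coins, the evenly mixed state (2 heads) can be reached in 6 ways, while all heads can be reached in only 1 way, so the mixed state is six times as likely.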

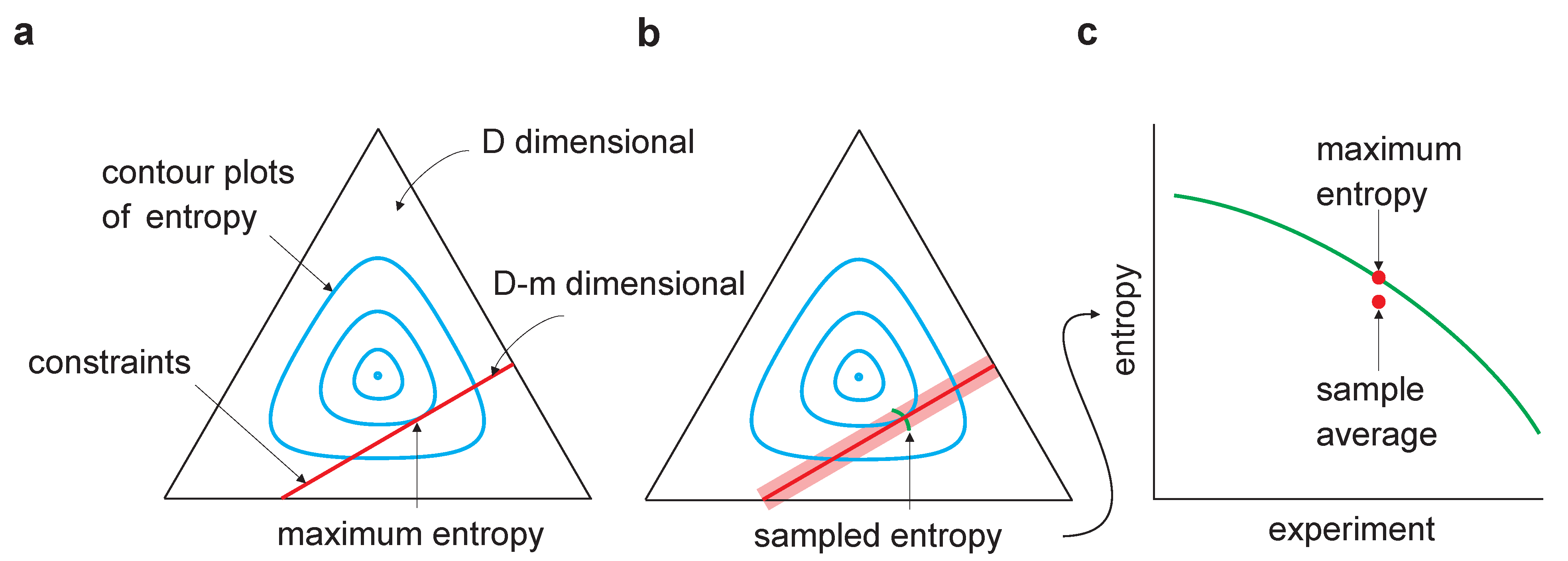

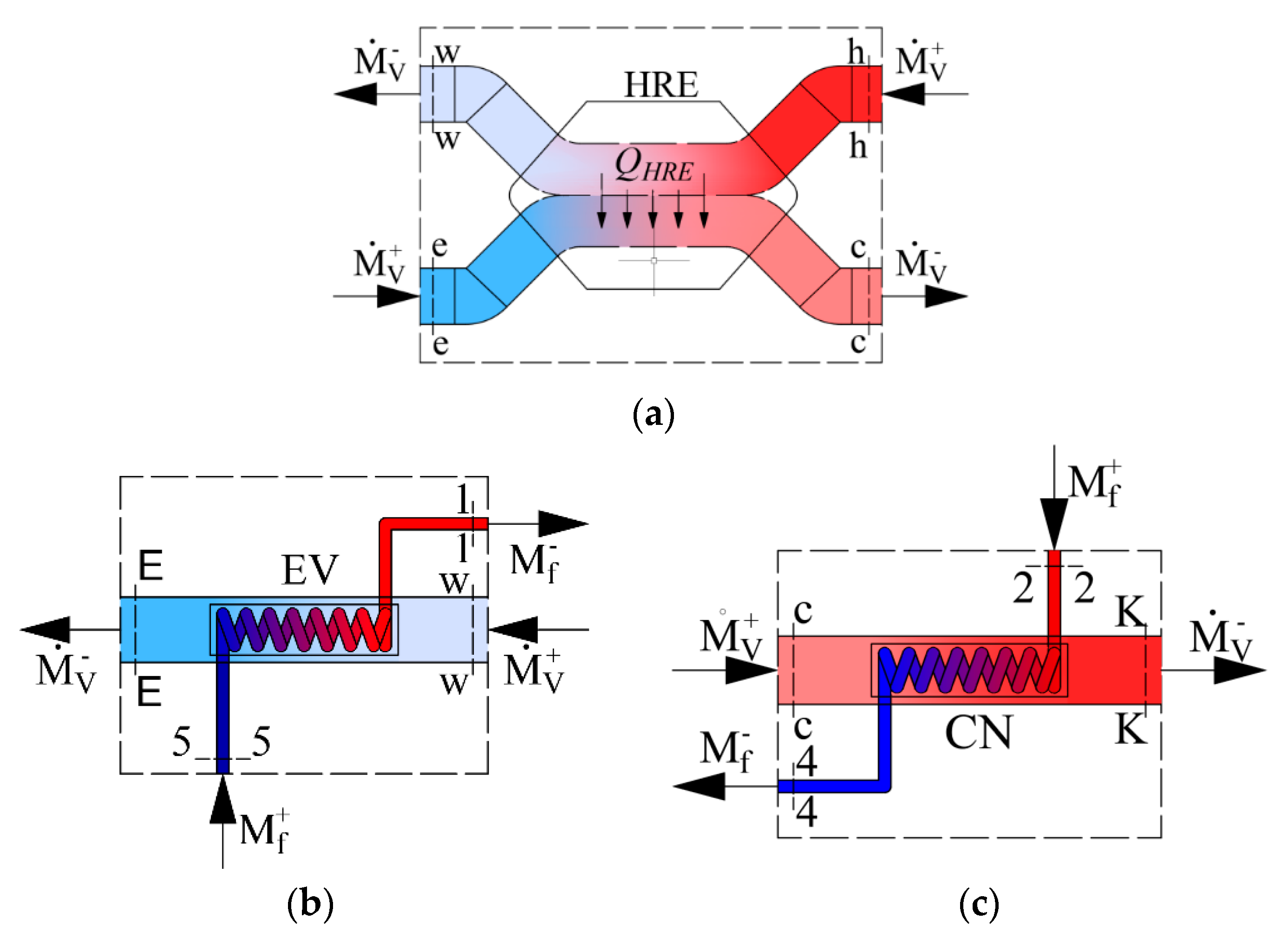

In this section, we finally get to the 2nd Law of Thermodynamics. This section is heavy on theory and on understanding the math rather than applying it. It might be one of the hardest sections to understand because it deals with intangible quantities, so just try your best.

Entropy is disorder. That is the most surface-level definition there is. Some people also define entropy as molecular freedom, and you can also define it as randomness or lack of predictability. The universe wants more disorder. In thermodynamics, the tendency of an isolated system to move toward a state of higher disorder is what gives time its direction, often called the "arrow of time."

The 2nd Law of Thermodynamics states that the total entropy of a system and its surroundings will never decrease. So the entropy, or disorder, can either stay the same or increase.

Calculating entropy is not tested on the exam, but we will learn briefly about it anyway in case you study it in college. Entropy is represented by the letter S. For heat $Q$ transferred reversibly at a constant absolute temperature $T$, the change in entropy is the ratio between heat and temperature, $\Delta S = Q/T$.

There are two types of thermodynamic processes we can think about based on entropy.

Reversible - these processes can be done forward or backward. They do not increase the entropy of the universe: entropy stays constant. A famous example is the Carnot cycle, which consists of 2 adiabatic processes and 2 isothermal processes. The gas is returned to its original state and no increase in entropy occurs.

Irreversible - these processes can only be done in one direction. The entropy of the universe does increase due to irreversible processes. All real-life engines perform irreversible processes. Heat pumps and refrigerators are some of the most common examples.

A related question is what units entropy has in the information-theoretic sense. Unlike thermodynamic entropy, which is measured in joules per kelvin, Shannon and differential entropy are unitless. Still, there are some details to elaborate.

First, units are sometimes given as "nats" or "bits", for natural logs and binary logs respectively. But these are not really units of measurement (like meter, kg, ...) in the physical sense. The choice corresponds more to writing the measurement in the decimal or the binary number system. A measurement of length in meters can be written in decimal or in binary, and that does not change the unit of length used.

Some details: we treat Shannon (discrete) and differential (continuous) entropy separately. Shannon entropy is $H(X) = -\sum_x p(x) \log p(x) = -\mathbb{E} \log p(X)$, where $p$ is the probability mass function of a discrete random variable. Differential entropy is $h(X) = -\int f(x) \log f(x) \, dx = -\mathbb{E} \log f(X)$, where $f$ is the probability density function of a continuous random variable.

Now, from general principles, the unit of measurement of the expectation (mean, average) of a variable (random or not) is the same as the unit of measurement of the variable itself. This leaves us with the unit of measurement of $\log p(x)$ and $\log f(x)$ respectively. Again, from general principles (see "lognormal distribution, standard-deviation and (physical) units" for discussion and references), the arguments of transcendental functions like $\log$ must be unitless. That raises a problem: while $p(x)$ certainly is unitless, since probability is an absolute number, the density $f(x)$ measures probability per unit of $x$, so if the unit of $x$ is, say, $\text{u}$, then $f(x)$ has unit $1/\text{u}$. Strictly, $\log f(x)$ only makes sense once $x$ is expressed as a pure number or $f$ is taken relative to a reference density. With that understood, the conclusion follows that both Shannon and differential entropy are unitless.
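To make the "bits versus nats" point and the density-per-unit issue concrete, here is a small sketch. The function names, the example distribution, and the choice of a uniform density are all assumptions made for illustration. It relies on two standard facts: changing the log base only rescales Shannon entropy, and the differential entropy of a uniform density on an interval of a given width is the log of that width.

```python
import math

def shannon_entropy(pmf, base=math.e):
    """Shannon entropy -sum p*log(p); zero-probability outcomes contribute 0."""
    return -sum(p * math.log(p) for p in pmf if p > 0) / math.log(base)

def uniform_differential_entropy(width):
    """Differential entropy in nats of a uniform density on an interval.

    For f(x) = 1/width on the interval, -integral of f*log(f) equals log(width).
    """
    return math.log(width)

# Hypothetical three-outcome distribution, chosen only for illustration.
pmf = [0.5, 0.25, 0.25]
h_bits = shannon_entropy(pmf, base=2)  # binary logs
h_nats = shannon_entropy(pmf)          # natural logs

# Same uniform variable, described in meters and then in centimeters.
h_meters = uniform_differential_entropy(2.0)
h_centimeters = uniform_differential_entropy(200.0)

print("Shannon entropy:", h_bits, "bits =", h_nats, "nats")
print("Differential entropy:", h_meters, "(m) vs", h_centimeters, "(cm)")
```

The bits and nats values differ only by the constant factor $\log 2$, like writing the same number in another base. The differential entropy, by contrast, shifts by $\log 100$ when meters become centimeters, which is the symptom of $f(x)$ carrying a unit of one over the unit of $x$.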
